Writing excerpt from thesis research, instructed by Anthony Vidler and Anna Bokov.

Abstract

If cultural criticism in the 1970s targeted machine productivity and consumerism, then its 21st-century counterpart addresses these issues with an added focus on digital technology. At the core of digital computation is optimization; to achieve the comprehensive understanding we desire, algorithms must identify patterns from a vast pool of samples and then produce and assess variations in an effort to reach optimal outcomes. These complex operations in human-machine communication prompt us to examine not just the results, but also the medium itself. An art of abstraction, digital computation communicates through data that represent empirical conditions as numerical functions.

Through sensing, collecting, and processing information on a planetary scale, our understandings of human behavior, urban functionality, and global energy dynamics have been shaped by digital data. Every aspect of life embodies datafication. This series of annotated montages reflects on moments where datafication manifests and transforms lives. These transformations can be physical or philosophical, occurring at personal, urban, or global scales.

Abstractions explain nothing, they themselves have to be explained: there are no such things as universals, there’s nothing transcendent, no Unity, subject (or object), Reason; there are only processes, sometimes unifying, subjectifying, rationalizing, but just processes all the same.

The Physics of Data

The desire to understand the environment on a global scale drives scientific advancement, resulting in technologies like remote sensing for weather monitoring and forecasting. Yet the immense scale and abstract nature of the methods involved ensure that the outcomes will often feel alienating to human perception. If climate change anxiety arises from the experience of strange weather, then data-driven climate policies are founded on very different grounds. While data captured by sensors follow systematic rules and protocols, the application of data is never neutral. Data tend to favor the quantifiable, which leaves lived human experience unaccounted for. They favor seamlessness, yet the translation from data to knowledge invariably involves physicality that creates friction.

Amplitude

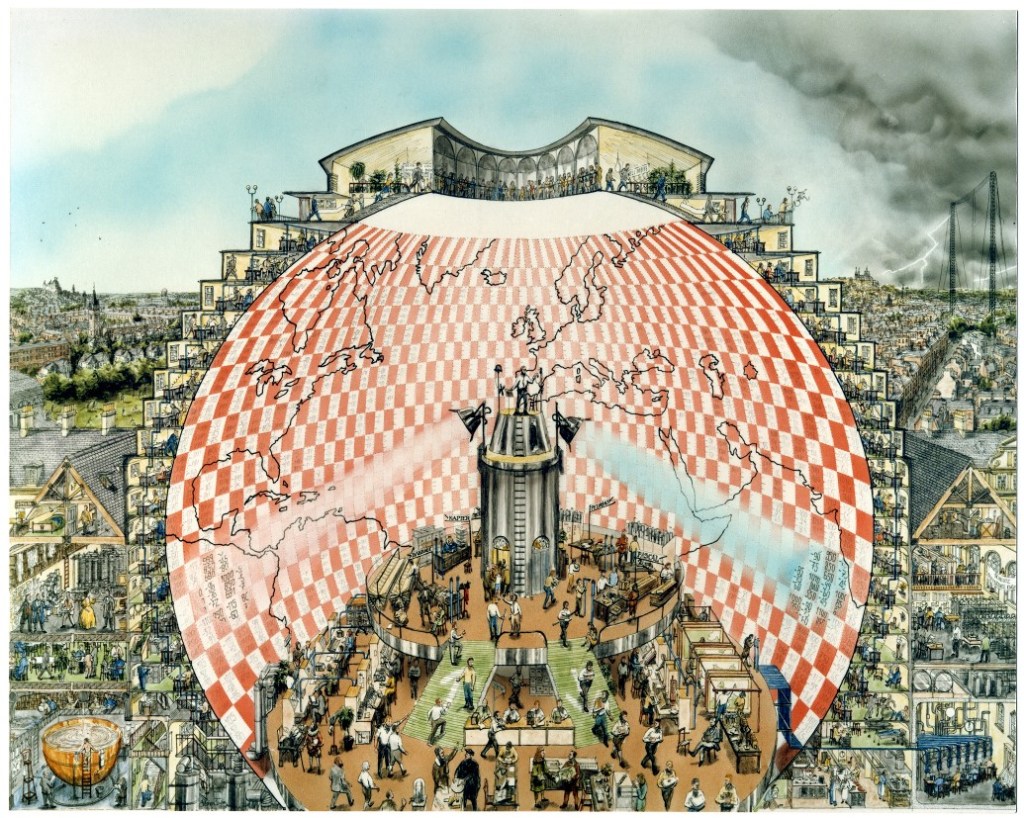

One of the early experiments in weather forecasting, conducted by the English mathematician Lewis Fry Richardson, offers insight into the amplitude of planetary data. In 1922, Richardson published Weather Prediction by Numerical Process, in which he “devised a method of solving the mathematical equations that describe atmospheric flow.” This marked one of the earliest formal attempts to apply mathematical equations and numerical data to weather prediction. Richardson began with an experiment to develop a mathematical model based on historical weather data. His method employed a “finite-difference grid,” dividing the area into cells along latitude/longitude lines and specifying the dynamical variables at the center of each cell. The grid also extended vertically, from the ground up to about 12 km, in a total of 5 layers. Within this grid, weather was quantified through meteorological variables such as temperature, pressure, and wind. Based on data collected by weather balloons at each grid point over short time intervals, Richardson formulated a series of mathematical equations designed to generate outputs aligned with a “retrospective forecast.” Given the vast amount of data, his method also sought to reduce the calculations to basic arithmetic operations. The computation, intended to produce a 6-hour forecast, ultimately took 6 weeks to complete.
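As a rough illustration of what it means to reduce forecasting to arithmetic on a grid, the sketch below advances a single row of cells by one finite-difference step. It is not Richardson's scheme or his equations: it assumes a one-dimensional ring of cells, a constant wind, and a simple first-order upwind difference, and the names `upwind_step` and `forecast` are hypothetical.

```python
def upwind_step(values, wind_speed, dx, dt):
    """Advance a ring of cell values by one time step (first-order upwind).

    Assumes a constant, non-negative wind blowing toward higher indices.
    """
    courant = wind_speed * dt / dx  # fraction of a cell crossed per step
    if not 0.0 <= courant <= 1.0:
        raise ValueError("time step too large for this grid spacing")
    # values[i - 1] wraps around at i == 0, so the row behaves like a ring.
    return [values[i] - courant * (values[i] - values[i - 1])
            for i in range(len(values))]


def forecast(values, wind_speed, dx, dt, steps):
    """Repeat the single-step update `steps` times."""
    for _ in range(steps):
        values = upwind_step(values, wind_speed, dx, dt)
    return values


# Illustrative numbers only: an anomaly in one cell, a 10 m/s wind,
# 200 km cells, and six one-hour steps.
row = [0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
print(forecast(row, wind_speed=10.0, dx=200_000.0, dt=3_600.0, steps=6))
```

Every update is plain subtraction and multiplication, yet it has to be repeated for each cell, layer, variable, and time step, which hints at why the volume of calculation grew so quickly.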

Richardson documented several challenges encountered in the experiment, including inconsistencies in data collection due to operational constraints, the complexity of mathematically representing atmospheric conditions, and the volume of calculations required. The burden of labor, in particular, led Richardson to envision what he called the “forecast-factory.”

Fig. 1 “Weather Forecasting Factory” by Stephen Conlin, 1986.

The conceptual model of the “forecast-factory” has left a significant legacy. In A Vast Machine, Paul N. Edwards draws a parallel between the operation of the “forecast-factory” and computing, describing what the two share as “a coordinated human activity that harmonizes machines, equations, people, data, and communication systems in a frenetic ballet of numerical transformation.” By highlighting the human and material dimensions, the “forecast-factory” represents the practical reality of computing.

Materiality

With the notion of “data are things,” Edwards expands on the material dimension of computation, suggesting that data modeling and processing are not just abstract exercises but deeply embedded in physical infrastructures — machines, energy consumption, and human labor. “Data friction” refers to the resistance encountered when transforming raw observations into processed, usable data, which requires substantial physical and organizational resources. Edwards emphasizes that such friction shapes the accuracy, efficiency, and even biases of computation models, making material constraints an unavoidable component of scientific practice. While information is often regarded in its abstract form, it is ultimately also a material artifact. Data — whether printed on paper or stored on servers — requires tangible resources, from the hardware to the energy required to run processing systems. This concept, which frames data as both an informational and physical entity, underscores the material reality of the infrastructure supporting data operations — a critical factor in the evolution of computation.

Model and Interface

The process of data manipulation begins with raw data, which is collected and analyzed using data models often powered by complex algorithms to derive meaningful information. This processed information is then presented through interfaces — primarily visual representations on screens — that enable users to interact with and interpret the data. These interfaces serve as critical points of engagement, bridging the gap between abstract data and tacit knowledge.

Representation

Our encounters with data primarily occur through digital interfaces, where information is structured and represented visually. Interfaces act as the medium through which we access and interpret data, translating abstract information into forms we can understand and interact with. These interfaces are designed to represent layers of data in a way that aligns with human perception. As interfaces make data more accessible, they often mask the subjective decisions involved in algorithmic design.

We have grown increasingly dependent on these interfaces for various aspects of daily life, such as using Google Maps for directions and location-based services. In practice, when responding to specific tasks like navigation, Google Maps selectively highlights certain elements — roads, tunnels, and bridges — while filtering out those irrelevant to the task. Numerous decisions are made within the system algorithms before results are presented to users, and while these choices may seem straightforward, some are more complex and can raise challenges.

No model can include all of the real world’s complexity or the nuance of human communication. Inevitably, some important information gets left out (…) A model’s blind spots reflect the judgments and priorities of its creators.

Behavior

The core value of any digital technology lies in the algorithms and data models that underpin it. These components not only define how data is processed and represented but also influence user engagement and experience. However, these underlying algorithms can reinforce systemic biases. Despite the perception of objectivity associated with data, the behavior of algorithms is often shaped by the design and implementation of mathematical models.

In Weapons of Math Destruction, Cathy O’Neil highlights how these mathematical models often reinforce societal inequalities and biases. With the broad integration of data models in education, finance, and law enforcement, nearly every aspect of life is influenced by data-driven systems. In education systems, algorithms used to evaluate teachers’ performance often rely on standardized test scores, and the outcomes are more likely to reflect socio-economic disparities than a teacher’s true effectiveness. As a result, teachers in struggling schools face higher rates of dismissal, perpetuating a cycle of inequality. Similarly, predictive policing models can lead to over-policing in marginalized communities, while credit scoring systems can penalize individuals based on socio-economic factors. These scenarios of data misbehavior demonstrate that the accuracy and reliability of data are highly context-dependent; a failure to account for context in its multiple facets can lead data to amplify inequality rather than mitigate it.

Agency and Interactivity

In applications like environmental observation, data is typically collected passively through mechanical sensors. In contrast, specialized fields such as urban studies employ a broader range of sensing methods that extend beyond passive observation. In the evolving landscape of urban sensing, where smart cities are a prominent field, urban life is increasingly shaped by technology that creates a complex network of interactions between the built environment, technology, and inhabitants. Unlike the traditional notion of the city, where infrastructure is largely static and unresponsive, smart cities leverage sensing technologies to gather data in real time, enabling responsive actions that affect everything from traffic flows to energy distribution and environmental monitoring.

At the core of this transformation is the concept of agency: the capacity of both human and non-human entities to act, react, and influence outcomes within urban systems. Alternative modes of sensing gather critical information about the underlying dynamics and complexities of urban environments, providing insights into social, economic, and environmental factors that influence urban life. Diverse data sources, such as environmental sensors, social media analytics, and geospatial monitoring, are integrated to facilitate a more comprehensive understanding of urban systems and their intricate interconnections. The data landscape is populated with sensors deployed by various agencies, contributing to expansive, multi-layered urban dynamics.

Actor

Control Syntax Rio, an exhibition organized by Storefront for Art and Architecture in New York and Het Nieuwe Instituut in Rotterdam, explores the mechanisms of urban control in Rio de Janeiro, revealing underlying conflicts and the complexity of agency within the city’s systems. At the heart of this control network is the Centro de Operações Rio (COR), which operates as both a corrective tool and a command-and-control hub, shaping urban interactions while revealing the underlying power dynamics in the city’s approach to public management. Contrasting “the smooth rationality of urban management that ‘smart city’ rhetoric proclaims,” Rio embodies a more complex reality where control mechanisms exist amid social tensions in contested spaces. The project investigates the critical question of where technology is situated within an existing urban context. By employing surveillance and control as modes of intervention, the system of sensing and control provides perspectives on the nature of the city, which is dynamically shaped by the diverse influences of its inhabitants and their modes of living. The project not only assesses the technical capabilities of urban sensing but also engages with the broader societal impacts, fostering a deeper conversation about the future of urban environments and the role technology plays in shaping them.

Fig. 2 Control Syntax Rio at Storefront for Art and Architecture, 2017.

Engagement

In the project Ghost Cities of China, led by the MIT Civic Data Design Lab, researchers examine modes of human habitation within urban environments through the lens of social media engagement. By analyzing geotagged social media posts and user interactions, the team visualizes cities as they are experienced by their inhabitants, highlighting the engagement and interactions that define urban life. The approach of gathering online data from individual users enables researchers to capture real-time information on human presence and activity, uncovering patterns of engagement in areas that might otherwise escape attention. The project assesses urban environments by quantifying their accessibility to amenities, such as schools, malls, grocery stores, and restaurants. This proximity serves as a crucial indicator of habitation, as access to these essential services significantly influences residents’ quality of life and their likelihood of settling in an area. By analyzing the spatial distribution of amenities, researchers can identify underutilized or neglected areas. The project offers a new perspective on understanding urban functionality, addressing the specific issue of real estate overdevelopment, which may signal deeper socio-economic challenges.
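To make the idea of quantifying accessibility to amenities concrete, the following sketch counts how many amenities fall within a given distance of a location. It is an assumption-laden illustration, not the project's actual method: the function names, the coordinates, and the 1 km radius are all hypothetical, and distance is measured as the crow flies rather than along streets.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def amenity_count(location, amenities, radius_km):
    """Count amenities lying within radius_km of a location."""
    return sum(1 for point in amenities
               if haversine_km(location, point) <= radius_km)


# Hypothetical residential block and amenity coordinates (lat, lon).
block = (30.0, 120.0)
amenities = [(30.001, 120.0), (30.0, 120.002), (30.1, 120.0)]
print(amenity_count(block, amenities, radius_km=1.0))
```

A low count around a large residential development would be one possible signal of the underused areas the paragraph describes, although any threshold for "low" would itself be a modeling decision.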

World System

Establishing the interconnected and interdependent nature of global socio-economic, political, and environmental dynamics, the concept of the “world system” envisions the planet as a network of information flows. Buckminster Fuller conceptualized it as “Spaceship Earth” in the 1960s, emphasizing the finite resources of the planet and the necessity for humanity to operate collaboratively to sustain these resources responsibly. Over the decades, the concept of “world system” has evolved significantly. Globalization has intensified the interconnectedness of economies and cultures, facilitating rapid exchanges of goods, information, and ideas. Remote sensing has substantially expanded the breadth and depth of planetary data acquisition; integrated with advanced computational analysis and visualization techniques, it enables us to engage with and interpret the intricate dynamics of the world system through various avenues.

Vector

In mathematics, a vector is an object that has both a magnitude and a direction. Vectors are often represented as arrows, where the length of the arrow indicates the vector’s magnitude, and the orientation indicates its direction. They can exist in any number of dimensions, with applications spanning geometry, physics, engineering, and more. Vectors are fundamental in understanding motion, forces, and other directional phenomena in both theoretical and applied contexts. Monitoring systems leverage vector analysis to track and represent the movements of vehicles, vessels, aircraft, and other objects, providing real-time data on their position, speed, and direction across global landscapes. This vector-based tracking is essential in fields like air traffic control, maritime navigation, and autonomous vehicle operations, where precision is critical. By integrating automation and management technologies, these systems enhance monitoring capabilities, allowing for more sophisticated control, prediction, and response to changing conditions.

Fig. 3 Maritime tracking with Automatic Identification System (AIS).
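The relation between a vector's components and its magnitude and direction can be sketched in a few lines. The example assumes two position fixes already projected onto a flat plane in metres (east, north) and a known time gap; the function names are hypothetical and are not drawn from any tracking system's interface.

```python
import math


def velocity_from_fixes(p0, p1, seconds):
    """Velocity components (east, north) in m/s from two planar positions."""
    return (p1[0] - p0[0]) / seconds, (p1[1] - p0[1]) / seconds


def speed_and_bearing(east, north):
    """Return the magnitude of a velocity vector and its bearing.

    The bearing is measured in degrees clockwise from north.
    """
    speed = math.hypot(east, north)
    bearing = math.degrees(math.atan2(east, north)) % 360.0
    return speed, bearing


# Hypothetical vessel: 30 m east and 40 m north in 10 seconds.
east, north = velocity_from_fixes((0.0, 0.0), (30.0, 40.0), 10.0)
print(speed_and_bearing(east, north))
```

The arrow's length corresponds to `speed` and its orientation to `bearing`, which are the two quantities a tracking display typically draws for each moving object.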

Assemblage

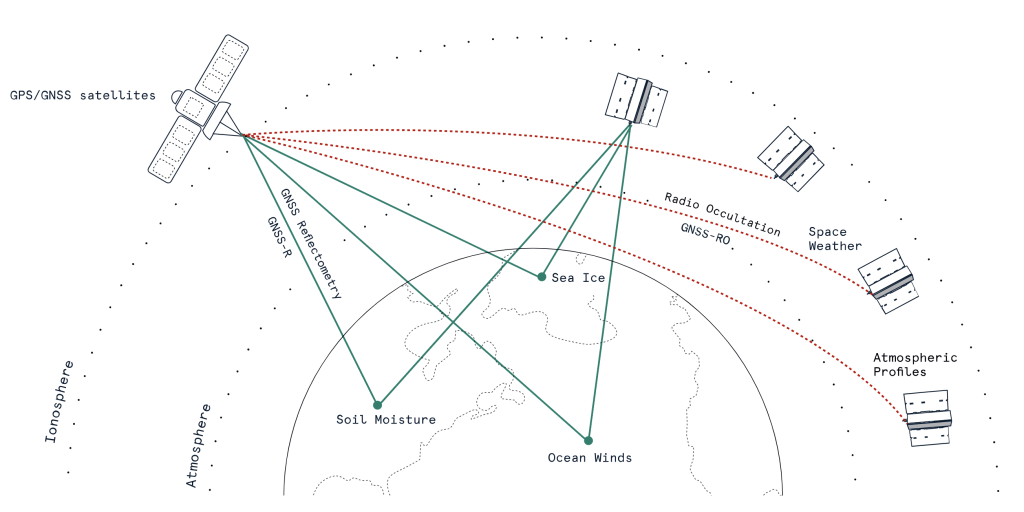

A world system is composed of a multitude of assemblages, each integrating diverse elements into a cohesive, interconnected structure. Planetary sensing technologies, such as radio occultation, exemplify the interconnected nature of assemblages. They integrate a constellation of sensing devices with data processing systems while remaining closely connected to the atmospheric elements of the planet. Radio occultation functions by measuring the bending of radio signals from satellites as they pass through the Earth’s atmosphere. When a navigation satellite transmits signals that traverse the atmosphere to a receiver aboard another satellite in low Earth orbit, changes in the signal’s phase and frequency indicate variations in atmospheric density and pressure. This data is then processed to derive profiles of temperature, humidity, and pressure at different altitudes. Radio occultation operates on the very principle of synergetics, a way of comprehending complex systems through the interactions among their elements. These interactions are observed via radio waves, which are influenced by the atmospheric conditions they traverse. The data is further spatially contextualized based on geometric relationships, facilitated by geolocation technologies such as the Global Positioning System (GPS). This process illustrates how various components within an assemblage synergize to provide comprehensive information. This synergy enhances our observational and analytical capacities in near real-time, ultimately leading to more accurate forecasting.

Fig. 4 Diagram of radio occultation.
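The link between signal bending and atmospheric variables can be illustrated with refractivity, the quantity that governs how much a radio signal bends. The text does not specify a formula; the sketch below assumes the widely used two-term Smith-Weintraub approximation and shows only the forward direction, from pressure, temperature, and water vapor to refractivity. An actual retrieval works in the opposite direction and is considerably more involved. The function name and sample values are hypothetical.

```python
def refractivity(pressure_hpa, temperature_k, vapor_pressure_hpa=0.0):
    """Atmospheric refractivity N in dimensionless N-units.

    Two-term Smith-Weintraub approximation: a dry term driven by pressure
    and temperature, plus a wet term driven by water vapor pressure.
    """
    dry = 77.6 * pressure_hpa / temperature_k
    wet = 3.73e5 * vapor_pressure_hpa / temperature_k ** 2
    return dry + wet


# Illustrative near-surface values: dry air versus moderately humid air.
print(refractivity(1013.25, 288.15))
print(refractivity(1013.25, 288.15, vapor_pressure_hpa=10.0))
```

Because temperature, pressure, and humidity all enter the same number, separating them from a measured refractivity profile requires additional assumptions or outside data, which is one reason the processing step matters as much as the sensing itself.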

Conclusion

Through algorithmic operations and representations, these processes have significantly expanded our capacity for world-sensing. This evolution has progressed from Richardson’s numerical model in the early 20th century to the sophisticated technologies of today, which rely on data collected through a diverse array of sensors and computational algorithms. These advancements illustrate the power of data to inform our understanding of the world, driving society further into a data-driven paradigm.

In metaphorical and metaphysical terms, a world is taking form; the formation is guided by processes of datafication, which encompass the nature and behavior of data, the agency it employs, and the way it represents and transforms the world. From its inception, datafication has been operating as a planetary-scale effort. As experiments in weather forecasting have revealed, the world functions as a system of constant exchange — not only of matter and energy but also of information.

The processes of datafication reveal the intricate relationships between technology and human senses. As a medium, data embodies a specific form of abstraction that is inherently tied to the techniques involved in its collection, representation, and dissemination. This abstraction shapes our understanding of and interaction with the world, influencing how we interpret information and construct narratives. As we navigate the data landscape within this ‘world-in-formation,’ we encounter multifaceted realities that prompt us to critically assess the systems we create and the narratives they convey. The potential for digital technologies to both illuminate and obfuscate human senses necessitates ongoing dialogues about data and agency, as well as the ethical implications of our increasingly data-driven society.

Fig. 1

“Weather Forecasting Factory” by Stephen Conlin, 1986.

“Richardson’s Fantastic Forecast Factory,” European Meteorological Society, n.d., https://www.emetsoc.org/resources/rff/.

Fig. 2

Control Syntax Rio at Storefront for Art and Architecture, 2017.

Storefront for Art and Architecture. “Control Syntax Rio,” n.d. https://storefront.nyc/program/control-syntax-rio/.

Fig. 3

Maritime tracking with Automatic Identification System (AIS) by Spire Global.

European Space Agency. “Spire Products Information.” Earth Online, December 6, 2022. https://earth.esa.int/eogateway/missions/spire/products-information.

Fig. 4

Diagram of radio occultation by Spire Global.

“Ahead of the Curve,” Spire : Global Data and Analytics, February 23, 2024, https://spire.com/blog/weather-climate/ahead-of-the-curve/.

Bibliography

Literature

Allmer, Matt. “Ahead of the Curve.” Spire : Global Data and Analytics, February 23, 2024. https://spire.com/blog/weather-climate/ahead-of-the-curve.

Deleuze, Gilles. Negotiations, 1972-1990. Columbia University Press, 1995.

Edwards, Paul N. A Vast Machine: Computer Models, Climate Data, and the Politics of Global Warming. MIT Press, 2013.

Fuller, R. Buckminster. Operating Manual for Spaceship Earth. Lars Müller Publishers, 2008.

Fuller, R. Buckminster. Synergetics: Explorations in the Geometry of Thinking. Estate of R. Buckminster Fuller, 1982.

Lynch, Peter. The Emergence of Numerical Weather Prediction: Richardson’s Dream. Cambridge University Press, 2006.

Mayer-Schönberger, Viktor, and Kenneth Cukier. Big Data: A Revolution That Will Transform How We Live, Work, and Think. HarperCollins Publishers, 2013.

O’Neil, Cathy. Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy. Crown Publishing Group, 2016.

Richardson, Lewis Fry. Weather Prediction by Numerical Process. Cambridge University Press, 2007.

Weizman, Eyal, and Fazal Sheikh. The Conflict Shoreline: Colonialism as Climate Change. Steidl, 2015.

Media

European Space Agency. “Spire Products Information.” Earth Online, December 6, 2022. https://earth.esa.int/eogateway/missions/spire/products-information.

Fischer, Eric. Locals and Tourists #2 (GTWA #1): New York. n.d. Flickr. https://www.flickr.com/photos/walkingsf/4671594023/in/album-72157624209158632.

Lynch, Peter. “Richardson’s Fantastic Forecast Factory.” European Meteorological Society, n.d. https://www.emetsoc.org/resources/rff.

Storefront for Art and Architecture. “Control Syntax Rio,” n.d. https://storefront.nyc/program/control-syntax-rio.

Spire : Global Data and Analytics. “Ahead of the Curve,” February 23, 2024. https://spire.com/blog/weather-climate/ahead-of-the-curve.